Building with Neurons

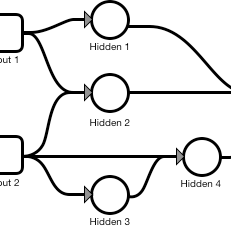

Coding the smallest possible neural network and explaining the math that makes it work.

In Chapter 1, you build the smallest possible neural network, with a clear explanation of the math that makes it work at each step.

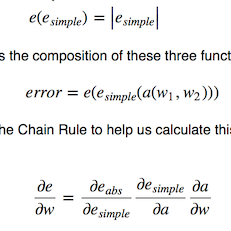

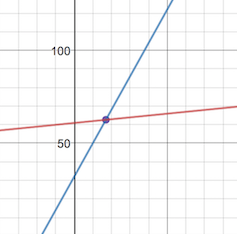

Derive the formulas that make neural networks work and translate them into code. No shortcuts; all of the math is here and easy to follow.

Separate the required building blocks of neural networks from the optimizations that make them scale.

As I was first learning neural networking, I struggled to separate the math that's required from the math that's an optimization. This book is a concise yet comprehensive explanation of the math behind neural networking alongside its matching code.

- Adam Wulf